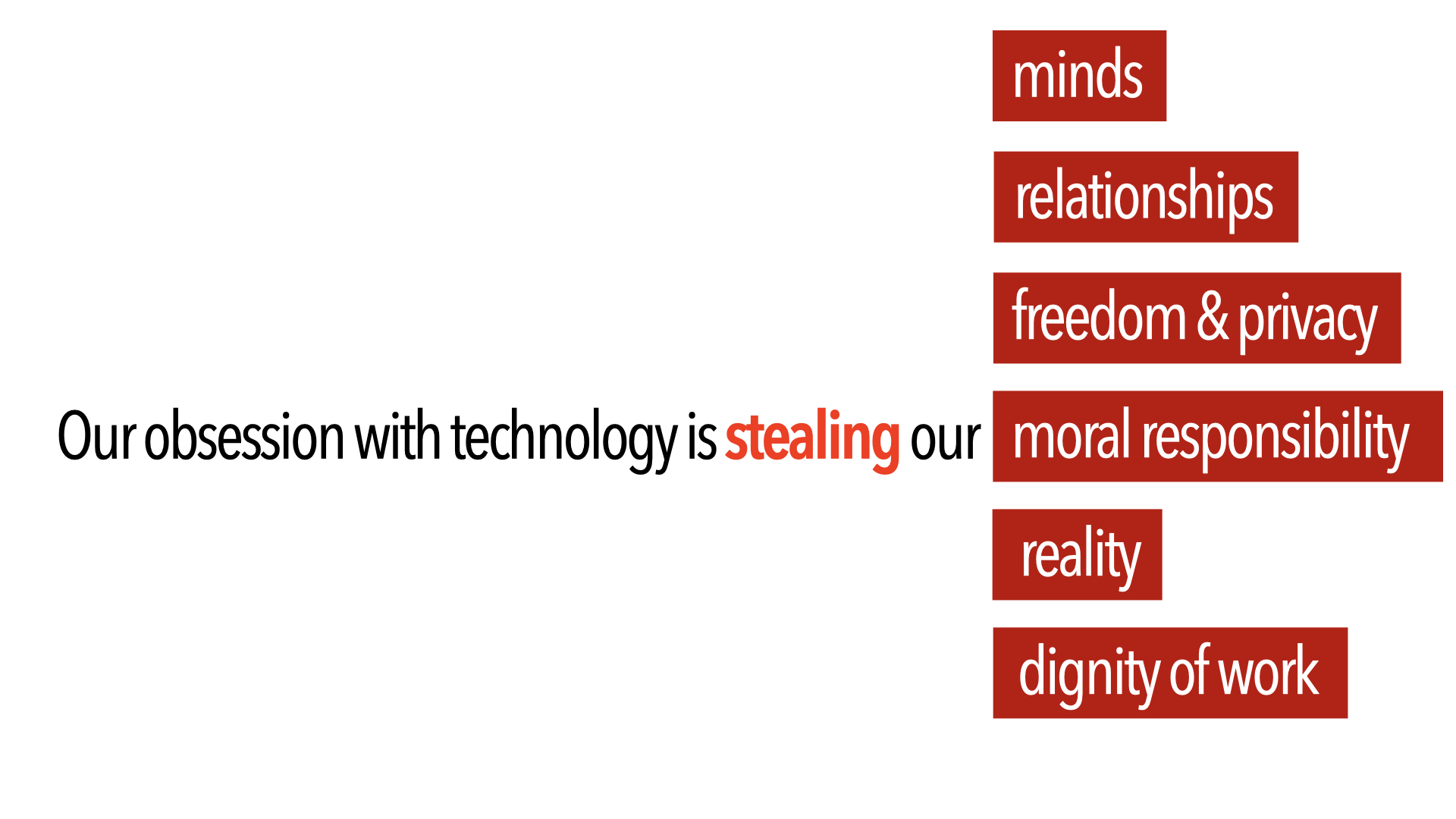

Artificial Intelligence (AI) pervades much of our lives. We use facial recognition to open our phones, and the state uses it to track us – so what’s the problem? Decisions are made using machine learning on our private and personal data, from shopping habits to medical history – have we lost control? We order our digital world in conversation with chatbots – how convenient, but is it changing our relationships with people? The prospect of a self-driving vehicle lies just around the corner – should we care that it might run over a child to save the passenger’s life?

Short answers to questions that you may have about AI

Why has AI become so widespread?

How is AI already impacting us?

What are the ethical issues behind the use of AI?

How does AI simulate human characteristics?

What are some of the moral dangers of using an AI?

What is the singularity and how does it relate to AI?

How does the Christian view of humanity challenge the idea of an AI singularity?

How does a Christian view of humanity challenge AI?

How can human responsibility survive the spread of AI?

Talks on Artificial Intelligence.

TWR Interview October 2025

AI & the dignity of work

Jeremy talks to Transworld Radio UK about AI and work, following the Church of England’s call for a national conversation on the impact of artificial intelligence on the world of work and its warning that rapid technological change raises profound questions.

ACT Conference September 2025

AI and Education

This keynote talk was given at the Association of Christian Teachers annual conference in London.

The talk explores whether the use of Generative AI in education is helping students and teachers develop virtues like wisdom, knowledge, integrity and love, or whether it is nudging them towards vices like sloth and avarice.

SGS STEM Careers Open Day, March 2025

AI and STEM Careers - Impact and ethical challenges

This keynote talk was given at the SGS Academy in Bristol to a group of students at a STEM careers open day. It covers what AI is and isn’t, applications of AI in STEM career areas and the ethical questions posed by AI in some of these work areas.

Affinity Symposium London 2 October 2024

AI in Christian Ministry - Biblical Principles for Evaluating AI Use

In this talk, Jeremy Peckham explored a Christian anthropology and our calling to imitate Christ and live virtuously, showing how this theology can form a basis for navigating our engagement with AI.

The live streamed symposium was hosted by Affinity and explored the opportunities and risks of using AI in Christian ministry.

Royal Society of Arts (RSA) 1 October 2023

A Harms and Governance Framework for Trustworthy AI

This short 5-minute talk was given to the AI Special Interest Group of the Royal Society for the Encouragement of Arts, Manufactures and Commerce, October 2023.

It provides a very brief overview of a Harms and Governance Framework that I have been developing to guide the development and use of responsible or trustworthy AI. It is based on six specific potential harms to humanity from some applications of AI.

Webinar broadcast by Prodocens Media - Romania 23 February 2021

Masters or Slaves: AI and the Future of Civilisation

This webinar was given via Zoom to members of the Romanian Academics Network, organised by Emanuel Tundrea at Emanuel University, Oradea, Romania. It was broadcast live to the general public by Prodocens Media, a Romanian broadcaster, and watched by over 3,000 people. It has subsequently been watched 2,100 times.

European Leadership Forum May 2019

Masters or Slaves: AI and the Future of Civilisation

Jeremy Peckham spent much of his career in the field of Artificial Intelligence and, latterly, as a businessman and entrepreneur. In this presentation, he looks at the ethical issues surrounding the current and future capabilities of AI, particularly in relation to its widespread uptake by organs of State and across many fields such as medicine, finance, justice, family care, transport and security. He concludes by formulating a Christian worldview response to these challenges.

Masters or Slaves? AI and the Future of Civilisation – audio version.

Workshop Series

IFES Graduate Impact meeting in Berlin November 2018

Each talk is around 20 minutes.

Ethical Issues

Part 1 introduces the main ethical issues surrounding current AI technology from a Christian worldview perspective, including how we use AI, the technical challenges, such as bias and hacking, and the impact on personhood and jobs.

An overview of the three main ethics paradigms (deontological, teleological and virtue ethics) is presented, along with the consequences of mainstream utilitarian (teleological) ethics for AI issues. The ethical dilemmas in programming self-driving vehicles are discussed, together with the consequences of assigning moral agency and rights to machines. The talk concludes with a review of what organisations such as the IEEE and the EU are thinking about AI ethics and design standards.

Part 2 looks at the key drivers of AI ethics such as assigning moral agency, autonomy, perception of equality and surveillance in the context of our increasing reliance on machines and their cumulative impact on humanity and civilisation.

The talk considers how to develop biblically based AI ethics in the light of these developments by considering what it means to be human and created in God’s image. The ontological, functional and relational views of what Imago Dei means are presented, along with how they individually or together underscore that humans are special and created by God.

With this foundation, our relationship to AI is discussed and the talk concludes with some key questions that we need to ask about AI, or indeed any technology, in terms of its impact on humanity created in God’s image.

Part 3 concludes the series of talks on AI ethics with nine propositions that, it is suggested, could form the basis of a biblically based response to AI, especially in the light of our understanding of Imago Dei. These propositions cover issues ranging from where responsibility should ultimately lie to the assignment of rights, how AI should be deployed and the importance of people always knowing they are interacting with an artefact rather than a human.

The talk concludes by considering the dangers of idolatry and of seeking to become God in the quest for super intelligence. The sting in the tail of the unquestioned adoption and use of AI is that ultimately we could become slaves rather than masters!

Technology and Applications

Part 1 illustrates some of the areas in which AI technology is being used and highlights the current race for AI dominance amongst countries, especially China, as well as the significant increase in funding for AI in recent years.

Whilst AI is now in widespread use, commentators such as Elon Musk have highlighted that “AI is a fundamental risk to the existence of human civilisation”. We consider this claim by first looking at three broad definitions of AI capabilities: “Narrow AI”, where systems perform narrow tasks as well as or better than a human; “Strong AI”, where AI performs comparably with a human on a range of tasks; and “Super Intelligence”, where AI surpasses human capabilities. The idea of Trans-humanism is also explored. This talk concludes by explaining in broad terms how “Narrow AI” works and details what simulated humanness is.

Part 2 provides a road map of where AI is heading and discusses the technology behind AI, how it works and why AI has become more widespread in the last two decades. The dangers and threats from misuse of AI are discussed alongside the limitations in the underlying algorithms and how they leave AI vulnerable to misuse.

Part 3 investigates the likelihood of Narrow AI advancing to provide the capabilities of General AI and beyond. The varying views of scientists and others are considered and the fundamental challenges that need to be addressed, such as understanding consciousness and technology limitations, are investigated. Simulated humanness is described in more detail and illustrated by current progress in avatars and androids. The ethical problems that simulated humanness raises are then highlighted.

Published Articles

IEEE Computer Magazine, March 2024

An AI Harms and Governance Framework for Trustworthy AI.